Benjamin Franklin may have been talking about house fires in his famous proverb, “an ounce of prevention is worth a pound of cure,” but like most truisms, its wisdom reaches beyond its original intent to keep colonial homes from burning to the ground.

Preventive measures, whether refusing to line your fireplace with wood or vaccinating against smallpox, shape the trajectory of history and disciplines, not just individual lives.

The field of nutrition abounds with preventive approaches that have altered the landscape of medicine, from changing the diseases we treat as healthcare providers to changing a patient’s physique.

In the early 20th century, foodborne illnesses due to contaminated water and food led to physicians seeing hundreds of patients with typhoid fever, botulism, and scarlet fever—until the invention and widespread adoption of pasteurization, refrigeration, and better sanitation across nations.

Rickets was rampant at the turn of the 20th century until vitamin D fortification of milk began in the 1930s. Although rickets is making a comeback here in the 21st century, when was the last time you saw a rachitic rosary? This physical manifestation of micronutrient deficiency was commonplace 100 years ago.

Today, prevention in medicine and particularly nutrition more often focuses on chronic diseases. As 21st-century healthcare providers, we know research shows that lifestyle habits play a huge role in contributing to or preventing several leading causes of death in the U.S.: cardiovascular disease, cancer, obesity, and diabetes. At this moment in history, however, we have an additional exigence compelling us to act: a national financial crisis born out of paying for the treatment of diseases that could have been prevented.

The U.S. is in a Nutrition Crisis

On September 28, 2022, for only the second time in history, the White House held a Conference on Hunger, Nutrition, and Health to increase public awareness of and propose solutions to end hunger, advance nutrition, and reduce the harms of diet-related conditions in the U.S. The first landmark White House Conference on Hunger, held in 1969, launched or enhanced many of the federal food assistance programs we know today: the Food Stamp Program (now known as the Supplemental Nutrition Assistance Program or SNAP), the School Lunch Program, and the Special Supplemental Nutrition Program for Women, Infants, and Children (WIC).

These programs helped reduce but did not eliminate food insecurity nationwide. Over 50 years later, there still remains a terrible imbalance between what we should be eating and what we are eating. What happened?

Spiraling Healthcare Costs

If you want to find common ground with people of different political viewpoints in the midst of a heated discussion, just mention that healthcare costs are out of control. Suddenly, everyone agrees.

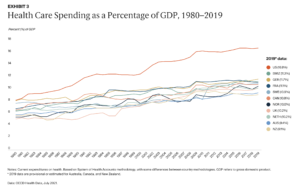

Healthcare costs rise faster than inflation, every year representing an increasingly larger portion of the U.S. economy. In 2020, spending on healthcare was 19.7% of the gross domestic product (GDP) in the United States. One of every five dollars spent in our economy is spent on healthcare. Compared to other high-income countries such as Norway, Australia, and Germany, we spend far more of our GDP on healthcare with poorer patient outcomes.

Each year, the U.S. government, through Medicaid and Medicare, spends hundreds of billions of dollars on healthcare expenses for diet-related conditions. Over a trillion dollars are spent on treatments that might be unnecessary if the diet of the average U.S. citizen were well-rounded and adequate in nutrients, with the right number of calories: enough to maintain a healthy weight, account for physical activity, and support growth in children.

Costs related to obesity and diabetes, two of the most common chronic health conditions in the U.S. with established nutrition components to their prevention and treatment, are similarly outrageous. One-third of Medicare’s costs are for patients with diabetes. The U.S. government is paying for the bulk of the consequences of diet-related diseases in our seniors, shouldering through Medicare the economic costs related to diabetes and obesity, which exceed $500 billion per year.

These expenditures are suffocating the U.S. economy and worsening the disparities that already exist between different segments of the population. People may live side by side on Google Maps but are worlds apart when it comes to the social determinants of health. We see the pressure of these widening disparities manifest in the patients we see in clinical settings. Yet how often do we as healthcare providers ask ourselves (much less our patients): Are they economically stable or struggling to pay the bills? Is their water safe to drink? Does their neighborhood have a grocery store or a playground nearby? Are they experiencing racism, discrimination, or violence that prevents them from accessing basic resources such as healthy food and options for regular exercise?

And with training in nutrition and nutrition counseling widely recognized as inadequate among health care practitioners, Congress recently took action.

Buckling under the increase in the morbidity and mortality rate among Americans over the last three years and the mounting costs of care for chronic, preventable diseases, the U.S. House of Representatives passed H.R. 1118 this May. This resolution addresses the significant but currently inadequate role that our academic and medical institutions play in the perpetuation of these problems, however inadvertent.

Inadequate Education in Nutrition

The state of nutrition education in the U.S. is fair at best in health profession education programs. U.S. medical schools, on average, require only 19 hours of nutrition education across all 4 years of the curriculum if they cover nutrition at all. Residency programs have minimal or nonexistent educational standards related to nutrition, and no state requires continuing medical education in nutrition. Many physicians admit they do not feel confident and able to fully address the nutrition needs of their patients.

Many healthcare practitioners are unaware that Medicare funds most graduate medical education (GME) programs in this country, particularly those in primary care specialties. Medicare’s dual function of funding GME as well as medical care for seniors finally compelled Congress to act this spring: H.R. 1118 calls on medical schools, GME programs, and other health profession training programs to provide meaningful education on nutrition and diet.

While it might have taken a Congressional resolution to get the attention of the healthcare industry, the reality is that as healthcare practitioners and educators, we have the means to implement improved nutrition education now, without delay. This comes with the proviso that we recognize the scope and depth of the origins of the U.S.’ nutrition deficits, as they are much broader than individual patients, spanning history and industries. Indeed, they implicate us all as consumers, voters, and professionals. So, how exactly did we get here?

Why We Eat What We Eat: The Influence of Big Agriculture

At the turn of the 20th century, small family farms were part of the local economy, known and tied to their local communities. Since then, the number of people working in agriculture and the number of farms has decreased drastically. By the mid-1970s, industrial agriculture began to thrive in the U.S. and push out smaller producers, culminating in the loss of 190,000 farms between 2007 and 2021. To add insult to injury, many of the smaller farms that still exist are not profitable enough to allow their operators to make a living; most of their primary income comes from non-farm sources.

Despite the decrease in number of farms, the U.S. is producing more food than ever before. Productivity has skyrocketed due to automation (think irrigation), biotechnology (think better seeds), and synthetic chemicals (think pesticides). With fewer farms producing greater volume, large-scale production became the norm. Monocultures are common and a single crop is grown for the entire planting season. While this practice simplifies planting and harvesting, resulting in higher yields and therefore profits for the farmer (and presumably lower costs to the consumer), it is significantly more detrimental to the environment. Single crops attract more pests, require higher levels of pesticides, deplete soil nutrients and microorganisms, require more water and fertilizers, impair biodiversity, and hurt pollinating insects.

In addition, much of the “food” produced on U.S. farms goes to livestock feed or biofuel. Corn makes up a third of all crop cash receipts and covers more acres of land than any other crop. Corn and soybeans combined are responsible for half of all crop revenues. However, the majority of both these U.S.-grown crops is destined to feed livestock, though corn is also used for industrial products like starch, oil, sweeteners, and fuel. Very little sweet corn (an entirely different plant) makes it onto our plates.

Government subsidies provide nearly 40% of farm income overall, and because farm subsidies are roughly aligned with farm output, those with larger production benefit most. Small farmers therefore get small payments, which encourages large agricultural companies to produce even more.

The result is an unsustainable agriculture system that subsidizes an industry that puts more meat on our plates rather than grows the plant-based produce needed to meet U.S. dietary recommendations and improve our nation’s health.

The Influence of Food Manufacturers

Following World War II, a construction boom in the U.S. pushed families to the suburbs. There, consumers drove to the grocery store and came home to increasingly sedentary lifestyles with their TV dinners. The wealth that many enjoyed during this period allowed food dollars to be spent on the burgeoning offerings in supermarkets – a ready supply of packaged and easy-to-prepare foods. The massive, modern industrial food system benefitted consumers by making food more available, generally safer, and at lower cost; however, it made it harder for consumers to tell what was in the foods they ate, as package content was many steps removed from the backyard garden.

The processing of the late 20th century gave way to the ultra-processing of the 21st century. Ultra-processed foods are made with manufactured ingredients that emulsify, bulk, color, bleach, preserve, or flavor. They contain little or no whole food yet account for half of all calories consumed in the U.S. Ultra-processed foods like pizza, sugary beverages, packaged snacks, cookies, and breakfast cereals are among the most profitable for food manufacturers. Billions of dollars in advertising budgets sustain company sales and keep stockholders happy. Companies employ psychological principles to manipulate people into buying less nutritious foods, making them tasty and convenient, while also targeting children directly, including those living in under-resourced communities.

Emerging research on ultra-processed foods shows an association with measures of poor health, including mental health. When study subjects have unlimited access to ultra-processed foods, they gain more weight than those offered unlimited unprocessed foods.

As if these nutrition deficits were not enough, the most painful paradox is that in this country where approximately 70% of the population has a BMI in the overweight or obese range, food insecurity and shortages still exist for many people.

Food insecurity means that a household is uncertain about acquiring or unable to secure enough food to meet the needs of all their members because they have insufficient money or other resources. In 2020, 10.5% of U.S. households were food insecure during some part of the year. There are clearly disparities in who has access to food and who does not.

The term “food desert” describes lack of food availability in some neighborhoods. In a food desert, a person would have to take the bus, subway, or other transportation to get to a grocery store. Food deserts are found in predominantly poor and minority communities, both urban and rural, and their presence is associated with poorer health outcomes.

The Impact of COVID-19 on the Food and Fitness Landscape

In early 2020, the world changed almost overnight. We desperately tried to come to grips with how to find or make a mask, how to juggle online school for our children or ourselves, how to work remotely, and how to live without the loved ones we lost. Among the difficult “how tos” of 2020 was one that cut to the core of human survival: how to feed ourselves and our families.

In its early days in the U.S., the COVID-19 pandemic caused a run not just on hand sanitizer, cleaning products, and toilet paper, but also on groceries of all kinds: breakfast cereal, canned vegetables, dried beans and grains. Consumers stocked up, unsure of how often they wanted to risk exposure by in-person shopping. Manufacturers began to streamline their products due to outbreaks of COVID at food processing plants and difficulties with harvesting and shipments during lockdown. Suddenly, we were living the shortages we were used to hearing about in other countries. Where had all our choices gone? Everything we thought we knew about what goes on a grocery store shelf and how it gets there was turned upside down.

The socioeconomic disparity between haves and have-nots widened. Consumers with a steady income and technological know-how switched to online shopping. Those of lower socioeconomic status, already more likely to be front line workers risking their own and their families’ health, often had no other option but to continue in-person shopping. Elderly and other at-risk shoppers set alarms for 12:01 AM to try to schedule a favorable pickup window at the neighborhood superstore.

In many locations, restaurants were closed for extended periods, forcing people to eat at home more, a potentially healthy switch under any other circumstance. Some people embraced the chance to cook more and improve their diets. Others buckled under the additional stressors of caring for children and elders, throwing out all rules they had regarding snacking and mealtimes as a survival mechanism. Disordered eating behaviors skyrocketed while opportunities for treatment dropped.

As people experienced job losses, reduced wages, and higher expenses, the pandemic exacerbated food insecurity. When schools closed, many children in need who relied on the National School Breakfast or Lunch Programs went without, despite waivers that enabled parents or guardians to pick up meals. Parents most likely to have the transportation needed and the job flexibility to pick up food during the day were least likely to need the help.

Yet despite pandemic inflation causing hardship to consumers’ wallets, the food and agribusiness industries made record profits while food prices soared in 2021.

Physical activity during the pandemic had similarly sudden and uneven impacts on people’s lifestyles. Higher income consumers created or expanded home gyms, causing shortages in kettlebells, resistance bands, dumbbells, and other exercise equipment. Bicycle and running shoe sales went up. Visits to state and national parks increased substantially. Exercise classes went virtual. People started walking their neighborhoods more. But for those living in resource-poor neighborhoods, the lack of green space often led to more sedentary lives.

Significantly, the changes for those with means haven’t lasted: two years later, many surveys show that, on average, people are less active than they were pre-pandemic. Average daily step counts are lower. Whatever gains toward a healthy lifestyle were made have not been sustained, or were never present across the whole population.

Saying Goodbye to the Illusion of Individualism

At this point in the pandemic, the CDC is permitting U.S. citizens to take an individualistic approach to its guidance. Assess and manage your own risk. Wear a mask if you want or need to. Make the choice to get vaccinated. There is little talk about revamping systems that could help keep everybody healthier, such as better building ventilation to increase air changes per hour in shared spaces, practices that operate in the background and could decrease risk of COVID without individuals having to do anything.

These hands-off policies have strong parallels to nutrition—the belief that poor eating habits are entirely the patient’s fault (or the caregiver’s, in the case of young children), while ignoring the broad systems at play in the background that influence what we eat and how we move. Healthcare practitioners need to understand how these systems interact with and impact our patients if we are to help them—and ourselves—navigate the many uncertainties of the upcoming decade.

The consumer is not really in the driver’s seat. Manufacturers continue to process foods in such a way that they are driving consumers’ behavior. Sophisticated marketing strategies, coupled with the 24/7 availability of foods that are hazardous to health, are manipulating our brains, leading to chronic overconsumption. Children spend many of their waking hours in childcare or school, subject to whatever foods are provided to them. Colleges sign contracts with vendors to offer fast food in dining halls and campus centers while granting “pouring rights” that allow sugary beverage companies to push their products on students. The mistaken idea that obesity is due to a lack of willpower or a moral failing leads to dangerous bias, discrimination, and the understandable reluctance of patients to seek out medical treatment for obesity.

There is a similarly overarching belief that physical activity habits are an individual problem. But again, how often, when we are counseling a patient to increase activity, do we ask, “Are there safe places to walk and exercise? Are there safe places for the children to play? What types of activities are available and accessible in your community?”

Bottom line, healthcare providers cannot ignore that medical science has been outmaneuvered by more powerful goliaths: the agricultural and processed food industries. This compels us to become even more tenacious stewards of the health of our patients, the nation, and ourselves.

Healthcare Providers as Lifelong Learners

As healthcare providers, we play instrumental roles in promoting proper nutrition and physical activity in our patients. Indeed, we wield significant power that can be used to educate patients and motivate them to adopt healthier life habits. The complexity of each patient’s circumstance, however, demands that we learn how to exercise that power effectively within the systems in which we live and operate as professionals and consumers. We must develop the skills to assess and, when needed, help improve or increase the available resources in each context if we are to have an enduring impact on patient outcomes and help them meet their nutrition and lifestyle goals.

Despite the overwhelming evidence that diet and other lifestyle factors are central to a patient’s health and well-being, you may be one of the many healthcare providers who received little formal training in nutrition throughout your medical education. You might be one of the 86% of residents who, in one survey, felt inadequately trained to provide nutrition counseling to their patients. Or among the 90% of physicians in another who want more opportunities to learn about the evidence behind dietary interventions.

If we are to fulfill our responsibilities to our patients and profession, we must address these knowledge and skill gaps by seeking out continuing education in nutrition. There is no substitute for being able to provide evidence-based nutrition guidance to our patients, no shortcut for learning the components of lifestyle medicine needed to prevent, treat, and even reverse chronic disease.

It is not enough to simply master the nutrition knowledge needed; it is crucial to fully appreciate and understand the many factors influencing patients’ eating habits. Not only the individual factors at play (taste preferences, income, health beliefs, cooking skills), but also sociocultural factors (social trends, culture), community factors (transportation, presence or absence of grocery stores and markets, school or workplace cafeterias and vending machines), commercial factors (Big Food and Big Agriculture), and government factors (agricultural subsidies and policies, food assistance programs and what they offer).

It is also crucial to have the knowledge of how one changes personal health behaviors and to master the patient-centered counseling and motivational interviewing techniques that will enhance success. A key practice skill for healthcare providers is being able to quickly and efficiently assess your patients’ main nutrition challenges, note problem areas, determine barriers that exist, and implement strategies to move patients toward a healthier diet that better meets their goals. This may require counseling around different eating patterns, explaining how to interpret a food label, or suggesting simple dietary changes that enable a patient to build on success.

Knowledge of the communities in which our patients live is also essential to better health outcomes. Can you locate an eating disorder treatment center that is accepting patients? Is there a support group nearby, and if not, is there an online one you can suggest? Where is the nearest Diabetes Prevention Program, paid for by Medicare for older people with prediabetes? For someone who doesn’t cook and needs to cut down on high-sodium convenience foods lacking in nutrients, can you point them to an online cooking class or reputable, healthy cook on TikTok? Finally, when the patient’s needs are beyond your expertise, your role is to arrange a referral to a Registered Dietitian Nutritionist (RDN) for in-depth assessment and medical nutrition therapy as needed.

We all exist in a system of concentric circles. The patient in front of you is the target of companies that market ultra-processed, low nutrient-density foods, companies that are in fact vulnerable, since they require both brand loyalty and an ever-expanding revenue stream. Patients are subject to the geography of the food supply. Economic, time, and educational constraints shape their food and activity choices. We all need to pay attention to and address the larger ecosystem, to educate, counsel, and empathize, and to act to reduce stigma rather than blame a person’s condition on poor choices or lack of willpower.

The Role of Physicians as Advocates

Few are in a better position to move the needle on the U.S.’ nutrition deficit than physicians. Physicians are not just medical professionals; they are seen as leaders and imbued with a public trust to solve our leading healthcare problems. They are also counselors, teachers, and advocates.

As advocates, those of you who are physicians are challenged to take actions that support social, economic, educational, and political changes, all with the aspirational goals of lessening suffering, improving health, and fostering well-being. Advocacy at all levels of our complex healthcare and surrounding geopolitical, industrial, and socioeconomic systems is important.

So what can you do? Physicians can make an impact at every level. At the clinic level, you can develop group visits to educate patients about nutrition in a supportive environment. You can integrate nutritional status as a “vital sign” in all routine patient contacts. You can ask simple, open-ended questions, such as, “What might prevent you from preparing more healthful meals at home?” or “Have you ever had trouble getting enough food for your family or yourself to eat?” You can model advocacy for younger trainees.

At the community level, you can advocate for more direct bus lines to full-service supermarkets or farmers’ markets. You can write a letter to the editor supporting a new community center or the expansion of a food pantry. You can join with other clinicians to compel your medical center to hold a weekly farmers’ market on site. While taking action may seem daunting at first, meaningful change comes from small steps. Observing an increase in the number of homeless persons visiting local emergency rooms, physicians Cate and Erik Ranheim, of Madison, Wisconsin, established a preventive care “street medicine” program in 2009. They, and eventually their residents and other healthcare providers, partnered with local pantries to get healthy food options to homeless individuals with chronic conditions, literally meeting them where they were, to provide them with basic resources that could transform their lives and improve outcomes.

At the state or national level, you can write your elected officials about legislation that affects nutrition and health. You can make phone calls in support of candidates who place a priority on public health policies and programs that make strides to address health disparities. A single gesture can inspire others and increase engagement across the health professions and entire communities. These are not inherently political acts, but they are the result of advocacy, and their effects are tangible in the lives they touch.

As 2022 comes to a close, the U.S. is at a turning point. Congress and the White House are poised for a new effort to establish a foundation for a common, healthier lifestyle for the nation’s citizens. This is an opportunity for you as a healthcare provider to intervene in your clinics and communities, to use evidence-based nutritional knowledge and counseling to make a difference in people’s lives. Collectively, we can exercise our not inconsiderable power to reverse the negative lifestyle trends of the past, improve our nation’s health outcomes, and lessen the stressors on the entire healthcare system.